In this article I will explore how, through the use of some basic techniques using Microsoft Excel and Google sheets, every test you do can automatically feedback to your students. So read on and by the end of the article and soon you will be a feedback alchemist yourself!

I used to find myself boiling with frustration at my students as they ignored my comments on their assessments when I returned them. Lesson time would be devoted to going through the test, carefully explaining where marks had been lost and what the correct answers were. With the time constraints most teachers are under, it is very difficult to give students one-to-one feedback on every aspect of a test. The best I could do “Lesson time would be devoted to going through the test.”was a general run through for the whole class and my hope that the students listened. They seldom did. Many of them would become frustrated with me too. A cluster of students that all got full marks on questions would complain loudly that I was wasting their time going through it in class. To be honest, they had a point.

Giving students feedback and grades usually results in less progress than giving them the feedback alone (Lipnovich & Smith, 2008). My experience reinforced this notion as I had repeatedly witnessed students look at their grade or percentage for a test and then switch off. It was therefore important to me to find a way to engage them and also personalise how I fed back. The problem was that the tests only generated a number. Anything more would be my subjective comments. Was there a way to turn summative into formative?

In 2007 I attended some CPD which showed a simple spreadsheet that analysed the Year 9 SATs for Maths on a question by question basis. On the spreadsheet, the number of marks a candidate scored for each question in the paper were itemised and then the cumulative total for each topic calculated. The aim of this document was for a department to reflect on its teaching and to identify any areas for development. It seems logical to assume that if every student is struggling with a particular topic then how this topic was delivered may need to be re-evaluated.

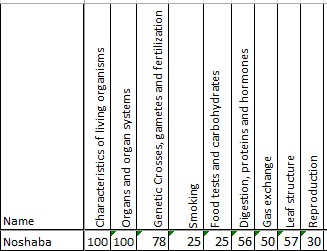

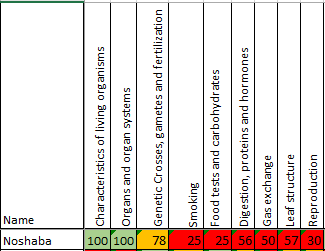

This was a positive first step but it was still of very little use to the student. So began the process of experimentation with Microsoft Excel! Pretty soon I had some rudimentary prototypes using Year 11 Mock exams as a starting point. By deconstructing the Mock paper, question by question, it was possible to determine the number of marks available for each topic area. The actual data from the students was then added and the percentage of the available marks obtained calculated. This meant I was able to say to Noshaba “Hey, you did really well on the organs questions but you got less than half the marks on food tests.”

Though pleased with this I was convinced that there was more that could be done. I was still having to sit down after the exam and manually enter lots of data. Allied to this was the fact that I was giving the students raw percentages that needed explaining. Hardly very labour-saving!

Although dissatisfied I felt there was still something to the idea so the playing around with Excel continued. After some advice on how to use conditional formatting to highlight cells with different colours depending on their content, the students could automatically see their areas of strength and weakness. This was a very simple yet effective way to communicate the information. I thought I had cracked it.

I thought wrong.

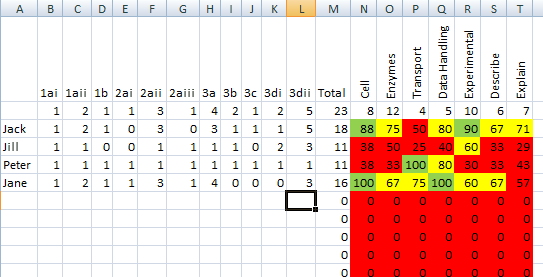

Although the students could now see where they were ‘red’ and where they were ‘green’, they were failing to make the next step. Something more was needed. It was around this time that I also realised that I was being incredibly narrow in terms of what was being counted. Sure, the topic by topic breakdown gave some indications of understanding, but I had not been measuring skills in the slightest. Luckily this was fairly simple to rectify; all that I needed to do was re-examine the Mock paper and allocate a skill for each question. For example, some questions were straightforward subject knowledge. Others may have required calculations or the interpretation of data. It was even possible to hone in on the perennial thorn in the Science teacher’s side: describe and explain command word questions.

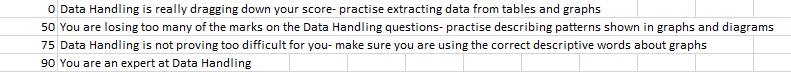

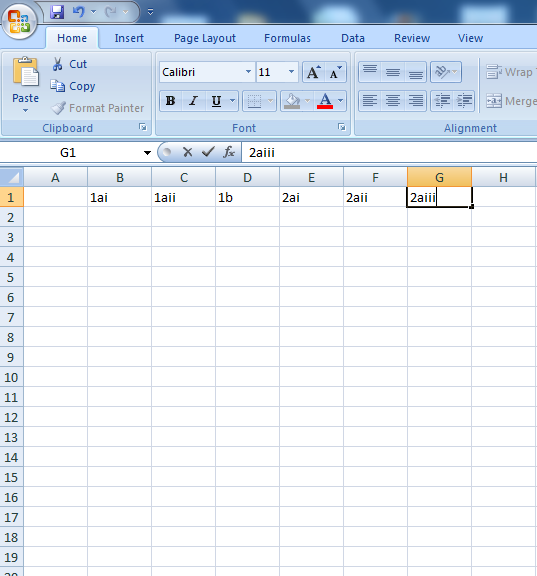

The final step in the saga was my exploitation of Vlookup tables. These are scary but surprisingly simple beasts to master. Simply put, what they do is look at the contents of one cell and then compare it to a table elsewhere on the spreadsheet. Most teachers will use these for changing percentages into grades or levels on their tracking markbooks. I took this a stage further. If this function could turn a percentage into a grade why not turn a percentage into a comment?

Through some trial and error a spreadsheet was constructed that was able to take the raw data from the tests and convert it into a series of comments that could just be printed out and given to the students. They received a whole page of detailed feedback which was specific to every topic area of the curriculum as well as every skill area examined. Each feedback sheet was specific to that student. This closed the gap between the students knowing where their weaknesses were and the action they needed to take in order to improve. So, for example, instead of Francesca knowing that she had only got 67% of the marks for enzymes questions, she would have feedback telling her that she should ‘Practise these sorts of questions and see what examiners are looking for in markschemes’.

The diagnostic tests were rolled out across the department. Many staff were happy with just being able to highlight areas of strength and weakness to their students, they chose not to make the final step to automatically generated comments, but this was still far superior to what had gone before. One of my staff developed the innovation so that the spreadsheet would also generate a pie chart or bar graph for each student, so that they could evaluate their performance visually which was welcomed by many.

One flaw still lingered - the sheer time it took to input the data. Even for the smaller ‘end of topic’ tests we were using the spreadsheet for, it could still take an hour to enter the necessary information to make the sheet work. To combat this, there were a few low tech workarounds. One way was for the students “Students would shout their scores out and have the teacher type them in.”to enter the data themselves on my computer during a lesson. Two at a time would come up and work away whilst the rest of the class were engaged with a different activity. This worked well but there was still the nagging guilt that students should not be using precious lesson time, even if it was only five minutes, to do data entry. An alternative was to get them to shout their scores out and have the teacher type them in. Again the rest of the class would be engaged in an activity whilst the teacher made their way through every student on the register. The problem of misused lesson time still spoiled what was a cracking little innovation.

Finally, the issue was solved using Google Docs. The template for the diagnostic spreadsheet would simply be uploaded and the students sent the link. They would then enter the data from home. This meant that the data entry could be set as a short homework, and the students would still have access to the rich feedback.

There are a few aspects that need to be considered before you start using the diagnostic spreadsheet method to give your students feedback:

1) Time investment Vs reward. Each spreadsheet will take about an hour to make. This is a worthwhile investment if lots of students are going to take the test and therefore benefit from feedback. However, if there are fewer than 20 students it would probably be quicker to just give them verbal feedback. At my school we had common assessments that the whole year group sat. This meant that approximately 140 students would benefit from the hour spent making a spreadsheet. Well worth it. A good way to make the time investment smaller is to divide the work so each member of staff does one spreadsheet and gets the use of ones made by the result of the team. Putting in a single hour of work and then getting the benefit of the access to five sheets is a much better pay off.

2) The expertise level of your staff. Some staff are comfortable with Excel and will find the work I have described easily accessible. Others may be insecure using the software or lack the required skills to use it effectively. Do bear this in mind when introducing this kind of innovation to your staff.

3) Consider the skills of your students. We avoided using this innovation with any students younger than 14. Though possible, I would have to seriously think about whether the students would have the maturity to handle such an intricate task. Even with the older students it would be wise to take the time to review what the point of the exercise is. Once the student realise that they are the ones who will benefit from the activity they will be much more inclined to both take it seriously and use the advice.

4) Use it as a reflective tool for the teachers. This goes full circle back to the original use of diagnostic spreadsheets that I saw at the CPD session all those years ago. It is insightful to see where your class is doing well and less well as a group. This enables you to focus revision but also adapt the teaching of certain aspects of the curriculum as needed. On a departmental level it was awesome to be able to see who taught specific topic most effectively. Moving forwards these members of staff could share how they had approached the teaching with their colleagues. This targeted sharing of good practice is especially useful.

To summarise, diagnostic spreadsheets are a great way to turn summative into formative feedback. They take a while to get a handle on and they do require a certain level of ‘front-end’ effort but the benefits far outweigh the initial investment in time. When I embarked upon this journey of discovery I had novice level of skill and was much more of a consumer than a creator of spreadsheets. If I can do it I am sure anyone can.

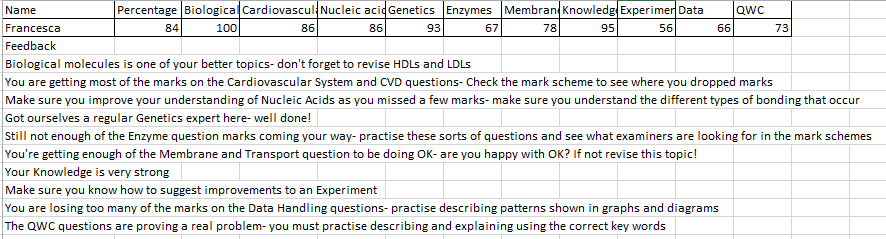

Here’s a step-by-step guide to creating a very basic diagnostic spreadsheet. Contact me if you would like the more advanced templates.

How to make a diagnostic spreadsheet:

1. Get a printed copy of your test paper and open Excel. You may create from scratch or use a template.

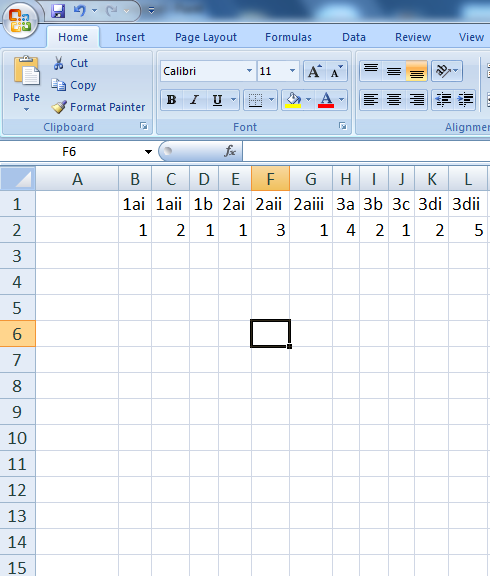

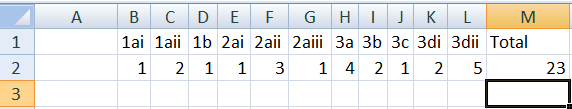

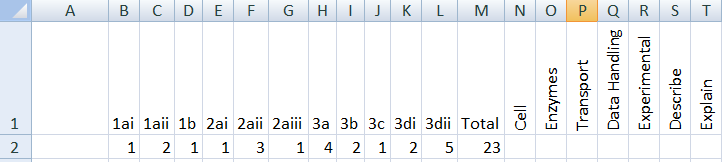

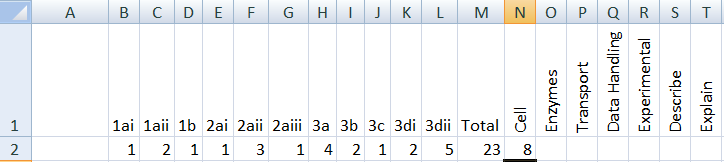

2. Assuming you are working from scratch leave the first column blank. Then add the question numbers into each column write the number of each question including sub-parts of questions.

3. Once you’ve added all of the question numbers add in how many marks each question is worth in the row underneath.

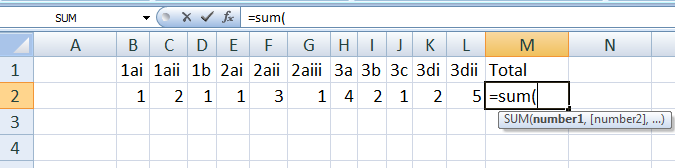

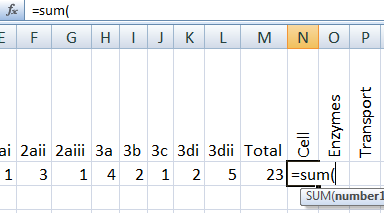

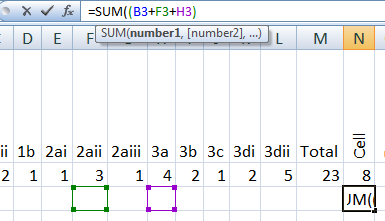

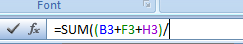

4. Now add a total column at the end. To make it calculate the total type in =sum(

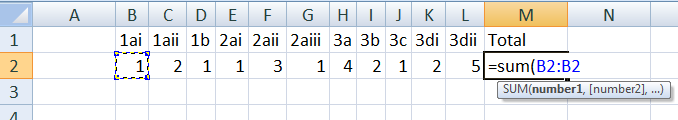

5. Then click onto the first mark you have entered and press the colon button on the key board:

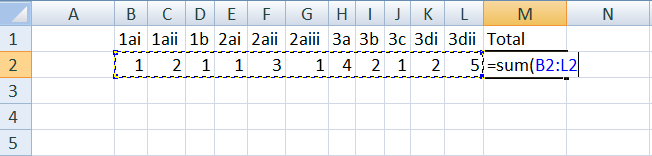

6. After you have typed in the colon click on the last mark you have entered and add a bracket to close the instruction.

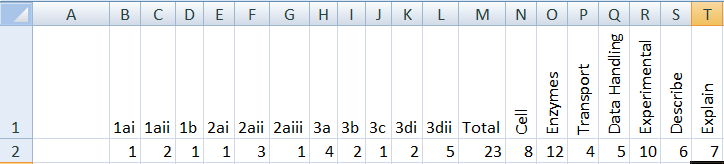

7. You should now have the total of all of the marks available.

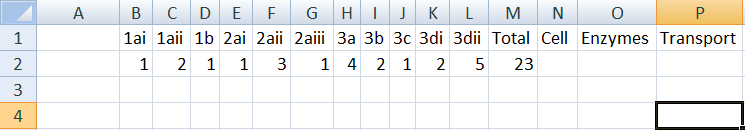

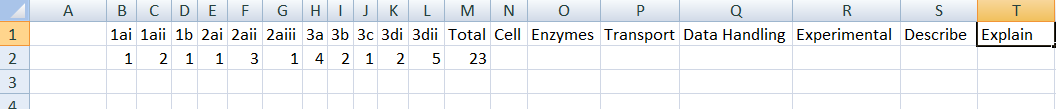

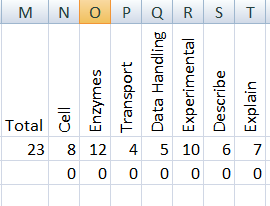

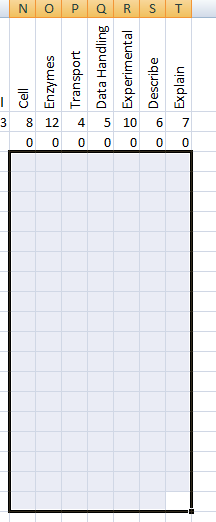

8. For the next stage you need to look at the test and split it into topic areas. 3 or 4 should be enough for most of our end of unit tests. Write these into the columns after the total.

9. Then add some skills areas- you can use question command words here too.

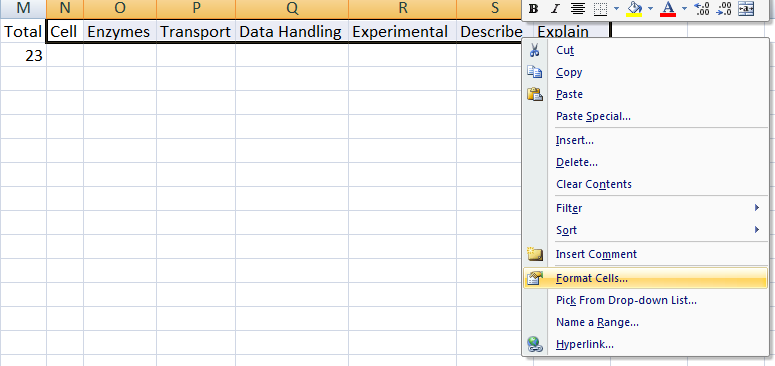

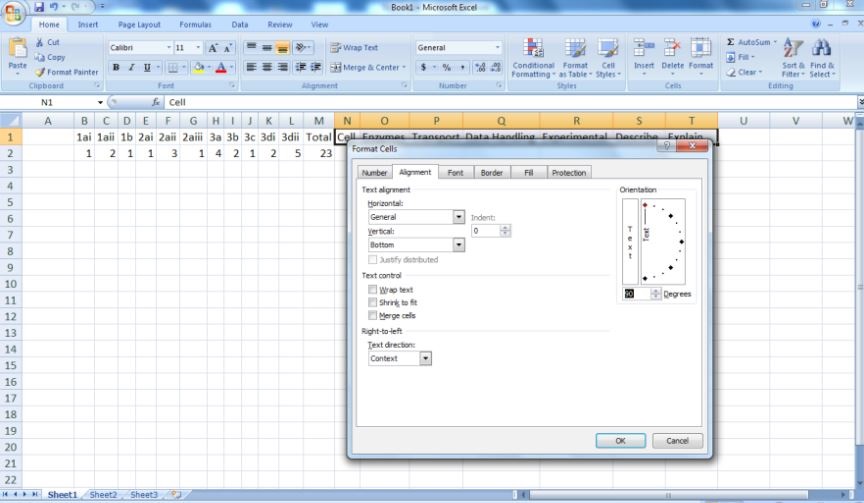

10. To make the spreadsheet more manageable select the columns with name labels and then right click select format cells.

11. Select the alignment tab and align the text at 90 degrees.

12. Now drag the topic and skills columns to make them narrower.

13. Select the cell underneath your first topic and type in the following:

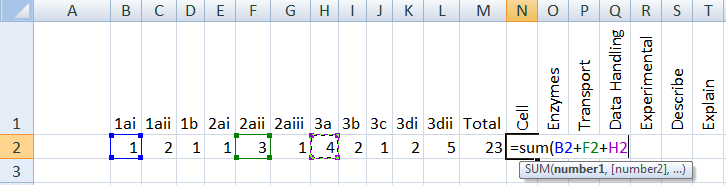

14. Now click on the marks for any questions on that topic adding a plus sign between each.

15. Close the brackets and press enter. You should now have the total marks available for that topic in the question paper.

16. Repeat this for each topic and skill area.

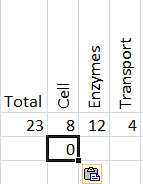

17. Select the cell containing your total of your first topic. Hold down the CTRL button and press the C button. Now select the cell immediately beneath. Hold down CTRL and press the V button this time.

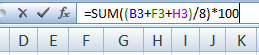

18. You should get a zero. This is good. Now add another bracket into the formula box between the word sum and the first bracket.

19. At the other end add a forward slash at the end of the formula.

20. Now add the total number of marks available for the topic. In this case the total number of marks available is 8. Then close the bracket and add an asterix (on the 8 button if you hold down shift) and the number 100.

21. Press enter. The cell should stay as a zero.

22. Repeat this process for each of the topics and skill areas.

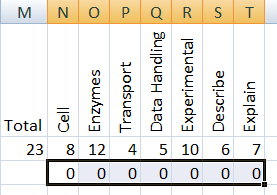

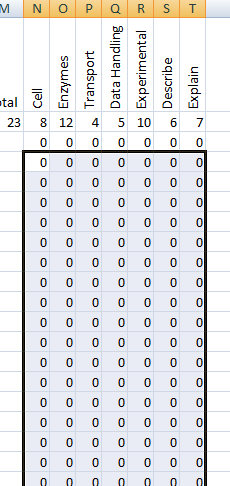

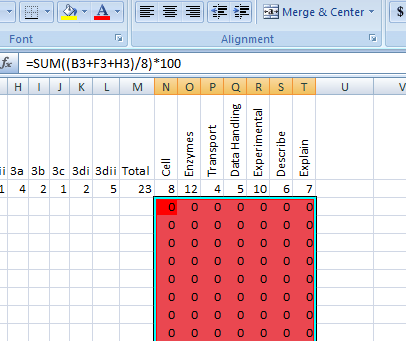

23. Now when you fill the marks in for each pupil you will get the % of the marks they got for each topic in these columns. Select the cells in the row you have just created, hold down CTRL and press C.

24. Highlight about 20 rows underneath the one you have just made then press CTRL and V at the same time.

This should happen:

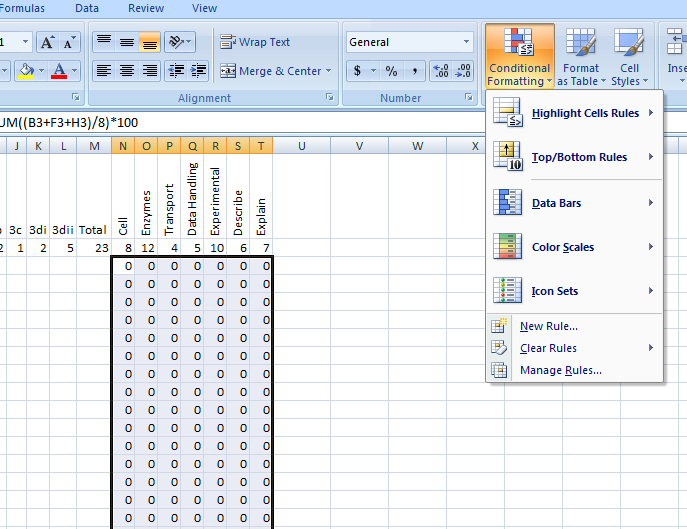

25. Select all of the cells you have just made and click on the conditional formatting button.

26. Click on the highlight cell rules and then the greater than button.

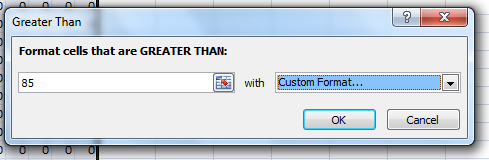

27. In the pop up window choose the upper boundary. This might be uniform across the subject. This % should be high enough to reflect good performance. In this case I have chosen 85.

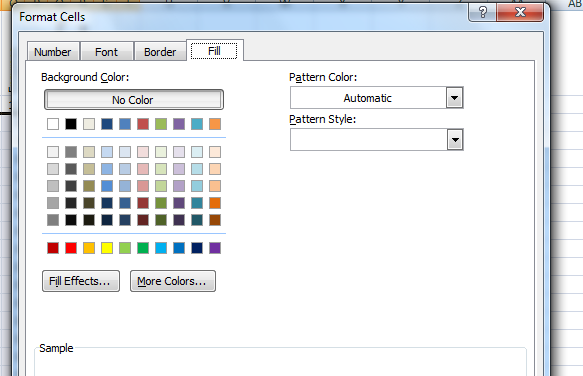

Then click on the other box and select custom format from the drop down window:

Select the ‘fill’ tab and click on green.

28. Click ‘OK’. Now any cell with a percentage higher than 85 will automatically turn green.

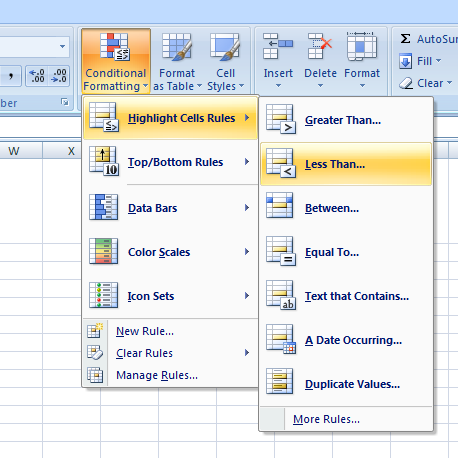

29. Repeat the procedure but this time after clicking the conditional formatting button select the ‘less than’ button.

30. This time choose a percentage under which you would be concerned. Again this might be something decided across the subject area. I’ve used 60. This time select custom format in the second box but choose red as the fill colour. Click ‘OK’. All the boxes will go red. This is good.

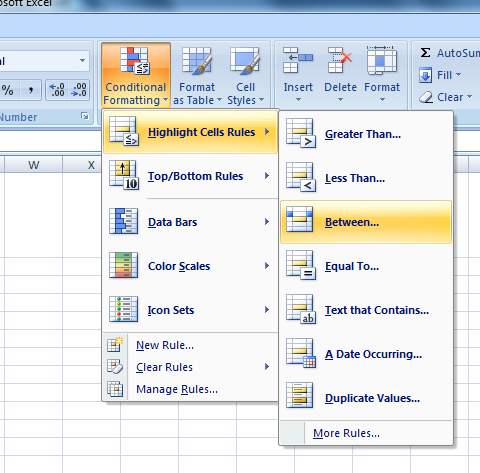

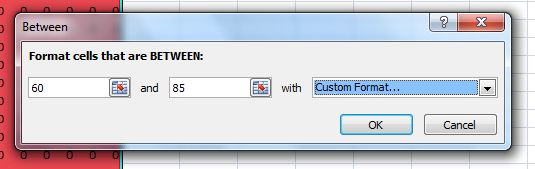

31. What about the pupils that get a percentage between 60 and 85 I hear you ask. Well we have a tab for these too. Click on the conditional formatting button. Select highlight cells and then the ‘between’ button.

32. Select you low value for the first box and you high value for the second box. Again click on the other box, go to custom format and select ‘fill’ choose amber this time. Click OK.

33. Nothing will happen. This is good.

34. Add the names of the pupils into the left hand column. Now add the marks they got for each question. The cells containing the percentages for each topic should change colour. This is good.

35. You will now be able to see very easily where each pupil is struggling.

Let us know how you get on below!