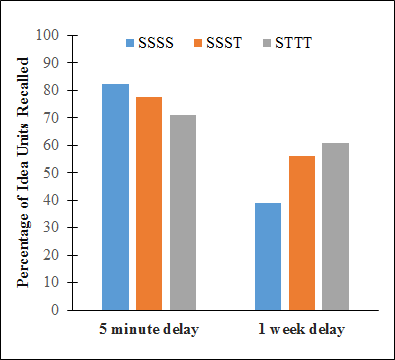

It appears that testing is more than a source of stress: many studies suggest that testing supports the memory retrieval that is crucial to excelling in certain subject areas. As a pedagogical tool, though, the testing effect goes against popular thinking. For instance, it is highly likely that pupils are encouraged to study a lot in preparation for an assessment. This is part of our teaching and learning DNA. However, Roediger and Karpicke (2006) paint a different picture. They conclude that pupils made more progress with frequent testing than by relying on studying.

So, instead of a traditional model of study, study, study, study then test, we should consider a new model with testing at the core: study, test, test, test then test.

Figure A: STTT table Roediger and Karpicke (2006)


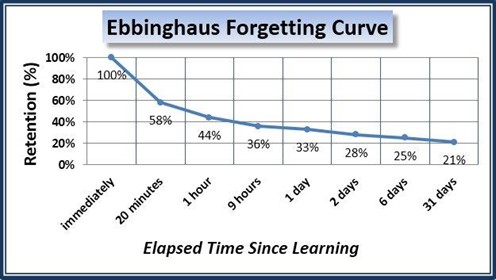

This does not mean, however, that pupils should be tested daily on the same material. A number of studies have shown that tests have more impact when they are spaced out. Spacing can take advantage of forgetting. But why would we encourage forgetting? Learning is about remembering.

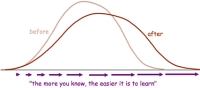

The Forgetting Curve

Hermann Ebbinghaus - famous author of the Ebbinghaus Forgetting Curve - theorised that the curve shows the rate at which we begin to forget knowledge. Initially the forgetting process is rapid, with the loss of 67% of learning in the first day; the rate of loss then slows each day after. This explains why, the next day, many pupils in your lesson may look blank when you start asking questions about the last lesson. Don’t become disheartened, however; use this to your advantage.

Figure B: Ebbinghaus Forgetting Curve table


Your intention is to make remembering more difficult. By spacing out the tests, you are using the forgetting curve to your pupils’ advantage. Testing at longer intervals can further enhance the retrieval process (McDaniel et al., 2011). This is known as the Spacing Effect. The more effort a pupil uses to recover the knowledge, the more they improve their retrieval strength for future use. Yes, the forgetting curve will set in quickly after the test, but regular testing slows the memory decay.

Usually, pupils tell us that they see no point in revising too early because they will just forget. And technically, they are correct - they will. Nonetheless, a pupil who spaces out low-stakes testing in the run-up to their examination will have stronger retrieval strength. And on a timed test, that can be a huge advantage in maximising points.

However, countless pupils and adults still prefer the old-fashioned ‘night before cramming’ approach - which does have its benefits, but only as a short-term fix. Spaced-out, low-stakes testing, meanwhile, improves long-term memory, so that you actually learn.

More interesting is that low-stakes testing does more than test facts and figures to improve retrieval strength. Butler (2010) suggests that low-stakes testing may also improve knowledge transfer, e.g. recognising common concepts or skills, or identifying patterns between different subjects. Most pupils will find this difficult, as will adults. I will regularly highlight links between subjects, which is usually followed by a chorus of “but this is History, not English!” However, the ability to make more links creates a richer understanding of a wide base of knowledge. The ability to make cross-curricular links between skills only serves to benefit the pupil.

But what impact does it have on teachers?

Firstly, low-stakes testing is a low-intensity, high-impact strategy. Initially there is work in creating the tests, yet the input is no different from current planning. The difference is that the quizzes are reusable year after year - considerably cutting teacher workload.

Secondly, including more testing does not disrupt the curriculum; it enhances it. Tests can be quick and easy to mark, and often provide a wealth of information that helps to tackle gaps in pupil knowledge, misconceptions, or a lack of understanding. Careful mapping of quizzes in schemes of work is needed to take advantage of the spacing effect. But this is merely a matter of signalling where new tests need to be taken, not a long process of rewriting the curriculum.

Thirdly, the use of more tests does not impact on a teacher’s style of teaching or any school’s lesson format. Instead, it enhances the everyday nuances of teaching. The information that tests provide can inform teachers about the types of questions to consider asking pupils, the resources to use to scaffold learning, or the best kind of pupil feedback. More testing is not a bolt-on; it’s an effective, formative activity to improve teacher planning and pupil learning.

How does this translate into classroom practice?

Low-stakes testing can take the form of many different kinds of quiz. However, not all quizzes are created equal!

Typical types of quiz used in the classroom include:

1. Short answer questions

2. Multiple-choice questions

3. Fill in the blank

4. Matching

5. True or False

Of these, the most effective are short answer and multiple-choice tests. The least reliable, and one that I would not recommend, is the true or false test.

1) Short answer questions

They are quick to write and easy to mark. Short answer tests are open-ended questions that force pupils to think back independently into long-term memory to retrieve answers. The more difficulty placed on the processing of an answer, the greater the beneficial effect it has on memory (the retrieval-effort hypothesis). The expected answers are short, ranging from a single word to short sentences; in some cases they may expect a list or a few lines. The wording of the questions needs to be carefully constructed. Although they will be open-ended, you want to encourage explicit answers.

Example short answer questions:

- Identify three possible factors for the start of World War 1.

- According to Newton’s second law, what causes acceleration?

- In Romeo and Juliet, why is Lord Capulet determined that Paris will marry Juliet?

- Explain how the rainforest supports local populations.

- Discuss how the heavy use of social media can impact the user’s mental health.

(Avoid questions that are too broad)

2) Multiple-choice questions

Multiple-choice question (MCQ) tests can have many benefits. They are easy to mark. They can cover a range of content, and test a wide range of higher-order thinking skills. However, there is an art to writing them. Make sure the distractors are plausible.

Example of an MCQ with plausible distractors:

What is the capital city of Oman?

a. Doha

b. Muscat

c. Manama

d. Ankara

Again, but this time, with weak distractors:

What is the capital city of Oman?

a. London

b. Muscat

c. Tokyo

d. Paris

The difference is clear. The first example challenges a pupil’s long-term memory in considering the answer. The second does not really tell us whether the pupil knows the capital of Oman or not.

To conclude

Tests don’t have the greatest reputation, but their impact on pupil learning should be reconsidered. The prevailing heavy use of summative assessment is unhelpful, not only for teacher workload but also because of how reliable its outcomes are in what they tell us, and how limited the intelligence is that can be drawn from them.

I see little use in setting a full summative test every half term, or in marking in-class activities with a GCSE grade. At a recent assessment conference, Daisy Christodoulou summed this up with a perfect marathon analogy. She asked “How would you plan for a marathon?” Not by running a daily marathon, or by testing everything you do against marathon time expectations. Instead, you would create a plan to prepare yourself, and test your progress in relation to the gains made during those activities in preparing for the race.

More testing is not as scary as it sounds. Tests need to be used more effectively, in a low-stakes format. Science is showing us that they can have a positive effect on pupil learning. Therefore, adding tests to our teaching toolbox could enhance pupil learning, all the while cutting down on teacher workload.

References

- Butler, A. C. (2010). Repeated testing produces superior transfer of learning relative to repeated studying. Journal of Experimental Psychology: Learning, Memory, and Cognition, 36, 1118–1133.

- McDaniel, M. A., Agarwal, P. K., Huelser, B. J., McDermott, K. B., & Roediger, H. L. (2011). Test-enhanced learning in a middle school science classroom: The effects of quiz frequency and placement. Journal of Educational Psychology, 103, 399–414.

- Roediger, H., & Karpicke, J. (2006). The Power of Testing Memory: Basic Research and Implications for Educational Practice. Perspectives on Psychological Science, 1(3), 181–210.
